The Revenue Signal — Issue 03

Last week, PwC published the clearest picture yet of who is actually winning with AI — and what separates them from the rest. The numbers are not encouraging for the majority.

Here is what you need to know.

The signal

The 20% aren't using more AI. They're using it differently.

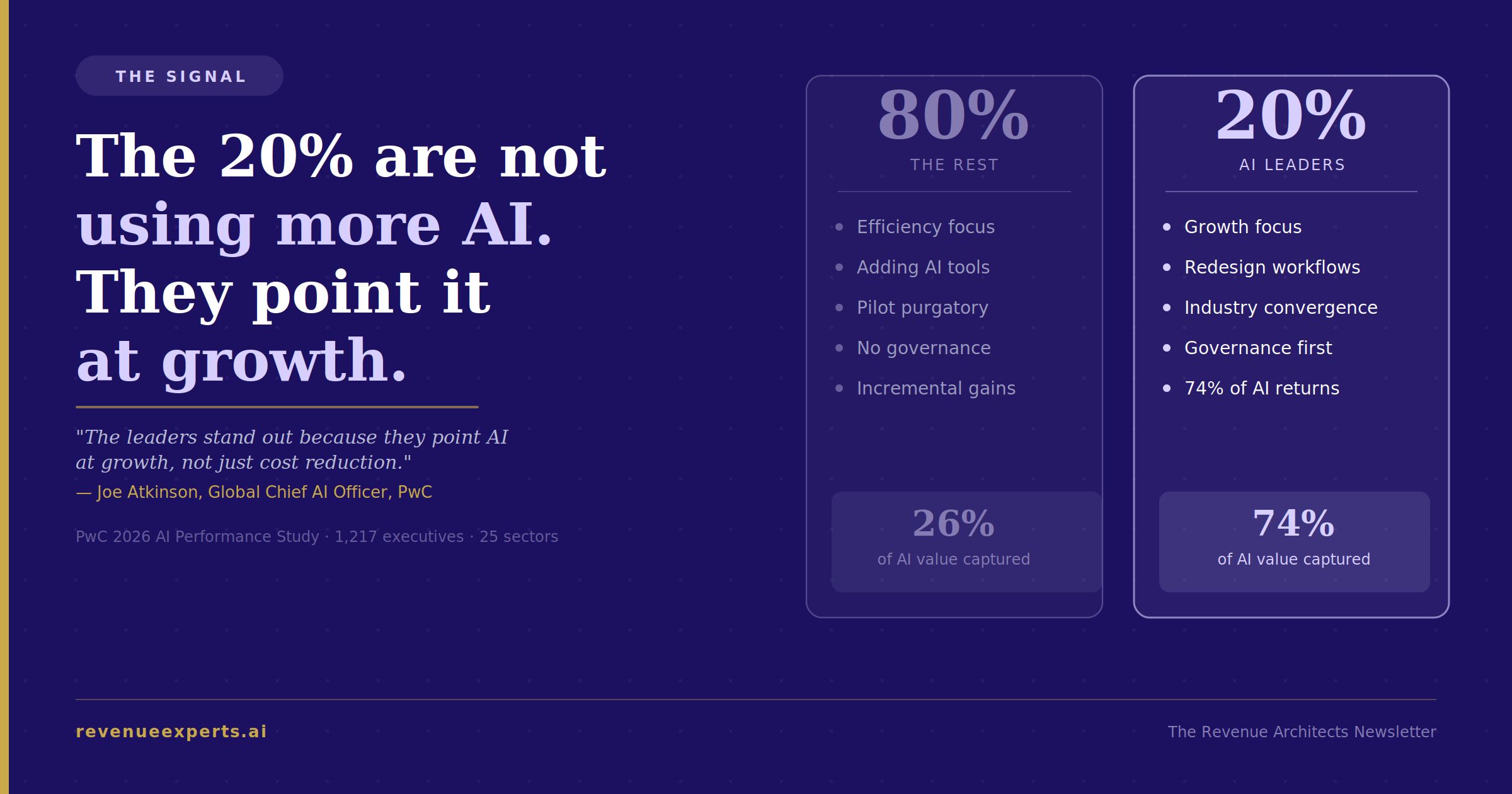

PwC's 2026 AI Performance Study surveyed 1,217 senior executives, director level and above, across 25 sectors worldwide. The finding is direct: 74% of AI's economic returns are going to just 20% of organizations.

The other 80% are not losing because they lack access to AI. Most of them have more pilots running than their teams can track. The gap is not a tool gap. It comes from what those tools are pointed at.

Companies inside the 20% are 2.6 times more likely to report that AI is improving their ability to reinvent their business model. They are two to three times more likely to use AI to identify growth opportunities from industry convergence, enter markets adjacent to their core, build new revenue lines, and form partnerships outside their traditional sector.

That last part carries the most weight. PwC identifies industry convergence as the single strongest factor in AI-driven financial performance, ahead of efficiency gains. The companies using AI to work faster are getting some value. The companies using AI to open new revenue are pulling away from the field.

The automation gap is just as wide. AI leaders are increasing the number of decisions made without human intervention at 2.8 times the rate of their peers. They run AI across multiple coordinated tasks within guardrails at 1.8 times the rate of others, and their employees are twice as likely to trust AI outputs. That trust is a governance outcome, not a cultural one. AI leaders are 1.7 times more likely to have a Responsible AI framework in place and 1.5 times more likely to have a cross-functional AI governance board.

PwC's Global Chief AI Officer, Joe Atkinson, put it plainly: "The leaders stand out because they point AI at growth, not just cost reduction, and back that ambition with the foundations that make AI scalable and reliable."

Without a shift in strategic framing, this gap will widen. The 20% are learning faster, scaling faster, and making decisions at a speed the 80% cannot match with current approaches.

The question every revenue leader needs to answer right now: when you list your active AI initiatives, how many are pointed at efficiency, and how many are pointed at growth?

That ratio tells you which side of this split you are on.

The build

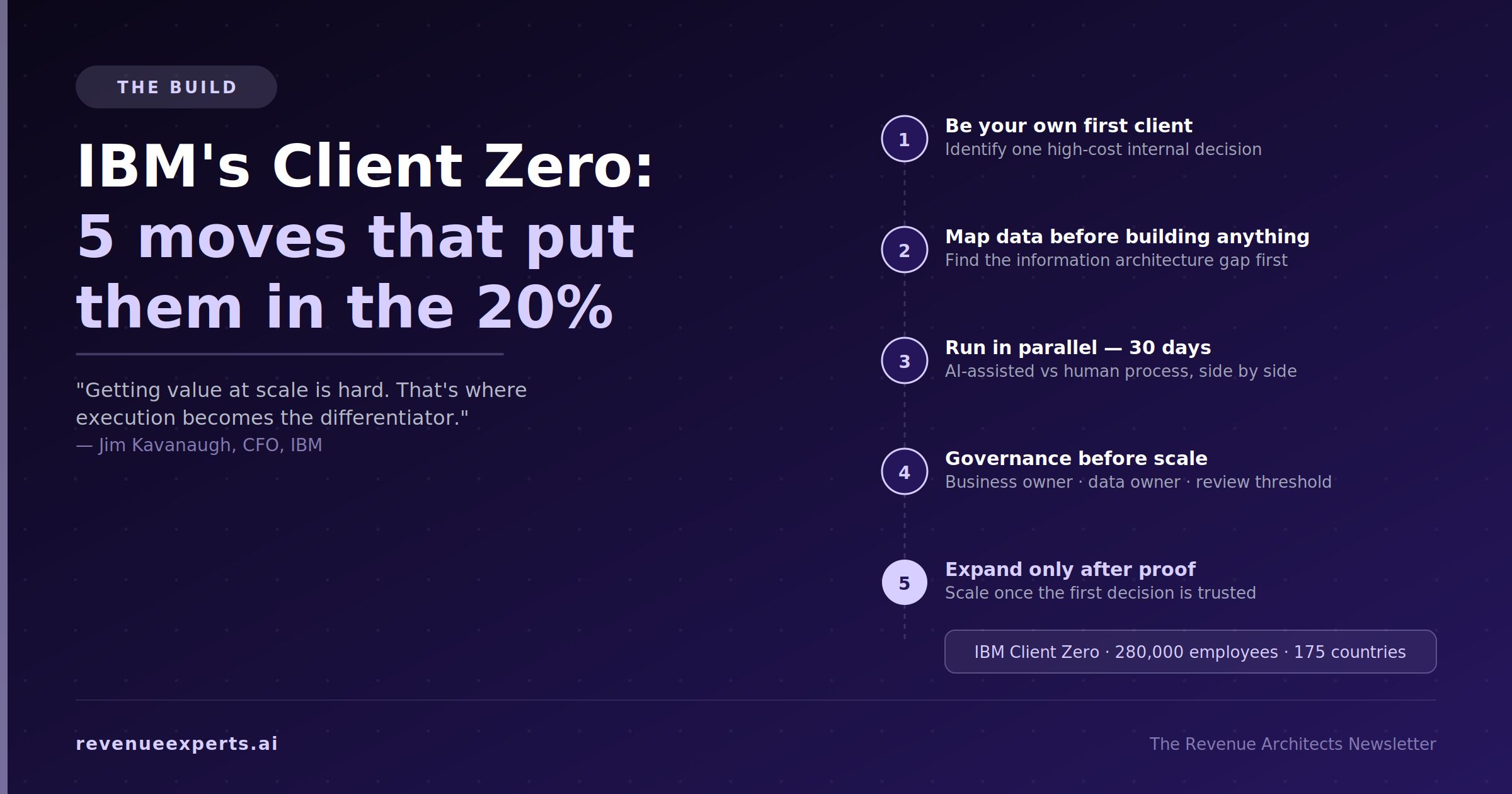

IBM's Client Zero: the five moves that put them in the 20%

SiliconANGLE's coverage of IBM CFO James Kavanaugh's appearance at the New York Stock Exchange this week captured the core point: deploying AI is relatively easy, but getting value at scale is hard. That is where most organizations struggle and where execution becomes the differentiator.

IBM's answer to that problem is a program they call "Client Zero." Before any AI capability goes to market, IBM deploys it on themselves, across 280,000 employees in 175 countries. They operate as the first customer of their own AI strategy. Here is what that looks like step by step and how you apply the same logic.

Step 1: IBM chose to be their own test environment, before touching external markets

IBM did not start by asking "how do we sell AI solutions?" They started by asking, "Where does our own organization make decisions slowly, expensively, or inconsistently?" That question, applied internally first, is what Client Zero is. It forced IBM to identify specific, measurable decision points rather than broad capability investments.

Your version: list three decisions your organization makes today that are slow or inconsistent, or that require more people than they should. Decisions, not processes: which accounts to prioritize, whether a deal is truly active or quietly stalled, when to escalate a customer risk before it becomes a loss. Pick the one where a wrong call costs you the most.

Step 2: IBM mapped the data infrastructure before building anything

Kavanaugh's point about getting value at scale is as much a data architecture point as an AI one. Before deploying AI on any internal workflow, IBM had to answer three questions: What data does this decision actually require? Where does it live? How reliably does it arrive? At IBM's scale, this is a formal exercise. At your scale, it is a whiteboard session.

Your version: for the decision you picked in Step 1, write down every data source a person currently uses to make it well. CRM history, call notes, email patterns, product usage signals — whatever actually goes in. Then identify which of those sources are clean and accessible versus manually assembled or frequently missing. That gap is your real problem. AI deployed on top of inconsistent data does not fix the decision. It makes a confident wrong call instead of a hesitant one.
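If a whiteboard is not handy, the same inventory can be kept as a short script. What follows is a minimal sketch, not anything IBM or PwC describes: the `DataSource` fields, the `find_gaps` helper, and the sample ratings are all hypothetical and should be replaced with your own assessment of each source.

```python
from dataclasses import dataclass


@dataclass
class DataSource:
    name: str
    clean: bool               # values can be trusted without manual fixing
    accessible: bool          # can be pulled without asking someone for an export
    manually_assembled: bool  # someone stitches it together by hand
    frequently_missing: bool  # often absent when the decision is made


def find_gaps(sources):
    """Return names of sources that fail the clean-and-accessible test,
    or that are manually assembled or frequently missing."""
    return [
        s.name
        for s in sources
        if not (s.clean and s.accessible)
        or s.manually_assembled
        or s.frequently_missing
    ]


# Illustrative ratings only (assumed, not taken from any real system).
sources = [
    DataSource("CRM history", True, True, False, False),
    DataSource("call notes", False, True, True, False),
    DataSource("email patterns", True, False, False, True),
    DataSource("product usage signals", True, True, False, False),
]

gaps = find_gaps(sources)
print(gaps)
```

Whatever ends up in `gaps` is the list to fix before any AI work starts.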

Step 3: IBM ran AI in parallel with the human process, not instead of it

“Client Zero” means IBM ran AI-assisted workflows alongside existing human workflows inside their own organization. They were not replacing the human process on day one. They were measuring whether the AI-assisted version produced better outcomes, faster outcomes, or neither. That comparison is only possible if you run both simultaneously for long enough to see results.

Your version: build the smallest deployable version of your target decision and run it in parallel with how your team currently makes that call. Do this for 30 days. At day 30, you have one question to answer: did the AI-assisted version produce a better outcome, the same outcome faster, or a worse outcome? If you cannot measure the outcome at all, you chose the wrong starting decision. Go back to Step 1.
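The day-30 question can be answered with a simple tally. The sketch below assumes you logged, for every case in the parallel run, whether each call turned out right and how long it took. The dictionary keys, the `compare_parallel_run` name, and the sample numbers are hypothetical; the fourth verdict ("no measurable improvement") is an added catch-all for a tie that is not faster.

```python
def compare_parallel_run(cases):
    """Compare human and AI-assisted calls logged over the same cases.

    Each case is a dict with assumed keys:
      human_correct, ai_correct: did the call match the eventual outcome?
      human_hours, ai_hours: time taken to reach the call
    """
    if not cases:
        return "not measurable"  # nothing logged: pick a different decision

    human_hits = sum(1 for c in cases if c["human_correct"])
    ai_hits = sum(1 for c in cases if c["ai_correct"])
    human_hours = sum(c["human_hours"] for c in cases)
    ai_hours = sum(c["ai_hours"] for c in cases)

    if ai_hits > human_hits:
        return "better outcome"
    if ai_hits < human_hits:
        return "worse outcome"
    if ai_hours < human_hours:
        return "same outcome, faster"
    return "no measurable improvement"


# Illustrative log of three cases (made-up values).
cases = [
    {"human_correct": True, "ai_correct": True, "human_hours": 4.0, "ai_hours": 0.5},
    {"human_correct": False, "ai_correct": True, "human_hours": 6.0, "ai_hours": 0.5},
    {"human_correct": True, "ai_correct": False, "human_hours": 3.0, "ai_hours": 0.5},
]

verdict = compare_parallel_run(cases)
print(verdict)
```

Three cases are far too few to trust; the point is only that the verdict comes from logged outcomes rather than impressions.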

Step 4: IBM built governance as part of the CFO mandate, not as a compliance afterthought

SiliconANGLE's coverage described the CFO role at IBM as shifting from financial guardian to enterprise architect. Part of that shift is owning AI governance. At IBM, this means a defined accountability structure: who owns the outcome of an AI-assisted decision, who monitors the data feeding it, and what triggers a review when the AI's outputs start drifting from expected behavior.

PwC's data confirms this is not optional. AI leaders are 1.7 times more likely to have a Responsible AI framework and 1.5 times more likely to have a cross-functional governance board. Those structures are what allow them to increase autonomous decisions at 2.8 times the rate of peers without those decisions going wrong.

Your version: before you scale anything out of the 30-day parallel test, put three things in place. A business owner who is accountable for outcomes. A data owner who monitors the inputs. A defined threshold (a number, not a feeling) that triggers a human review. At IBM's scale this is a committee. At your scale, it is named people, a written threshold, and a standing meeting cadence.
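"A number, not a feeling" can be as plain as a minimum hit rate over recent AI-assisted calls. The sketch below is one possible form, under stated assumptions: the `Governance` record, the `needs_human_review` function, the role titles, and the 0.80 threshold are all hypothetical placeholders, not figures from IBM or PwC.

```python
from dataclasses import dataclass


@dataclass
class Governance:
    business_owner: str   # accountable for outcomes
    data_owner: str       # monitors the inputs
    min_hit_rate: float   # below this share of correct calls, pause and review


def needs_human_review(recent_calls, governance):
    """recent_calls is a list of booleans: was each AI-assisted call right?"""
    if not recent_calls:
        return True  # no evidence yet, so keep a human in the loop
    hit_rate = sum(recent_calls) / len(recent_calls)
    return hit_rate < governance.min_hit_rate


# Assumed example values; set your own owners and threshold.
governance = Governance(
    business_owner="VP Sales",
    data_owner="RevOps lead",
    min_hit_rate=0.80,
)

healthy = needs_human_review([True] * 9 + [False], governance)
drifting = needs_human_review([True] * 7 + [False] * 3, governance)
print(healthy, drifting)
```

The exact metric matters less than the fact that it is written down, owned, and checked on the meeting cadence.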

Step 5: IBM expanded only after proving the internal version worked

Client Zero exists because IBM understood that credibility in the market comes from having done the thing, not from having a plan to do the thing. They bring AI solutions to clients after running them on 280,000 of their own people. That sequence — internal proof before external application — is also why their employees are confident using the outputs. The trust is earned, not assumed.

Your version: once your 30-day parallel test shows a measurable improvement, and your governance structure is in place, you have earned the right to scale to a second team or a second decision type. Not before. The 20% PwC measured are two to three times more likely to pursue industry convergence opportunities, but those opportunities become visible only after the infrastructure for spotting them is working. That infrastructure starts with one decision, proven, governed, and trusted.

The move

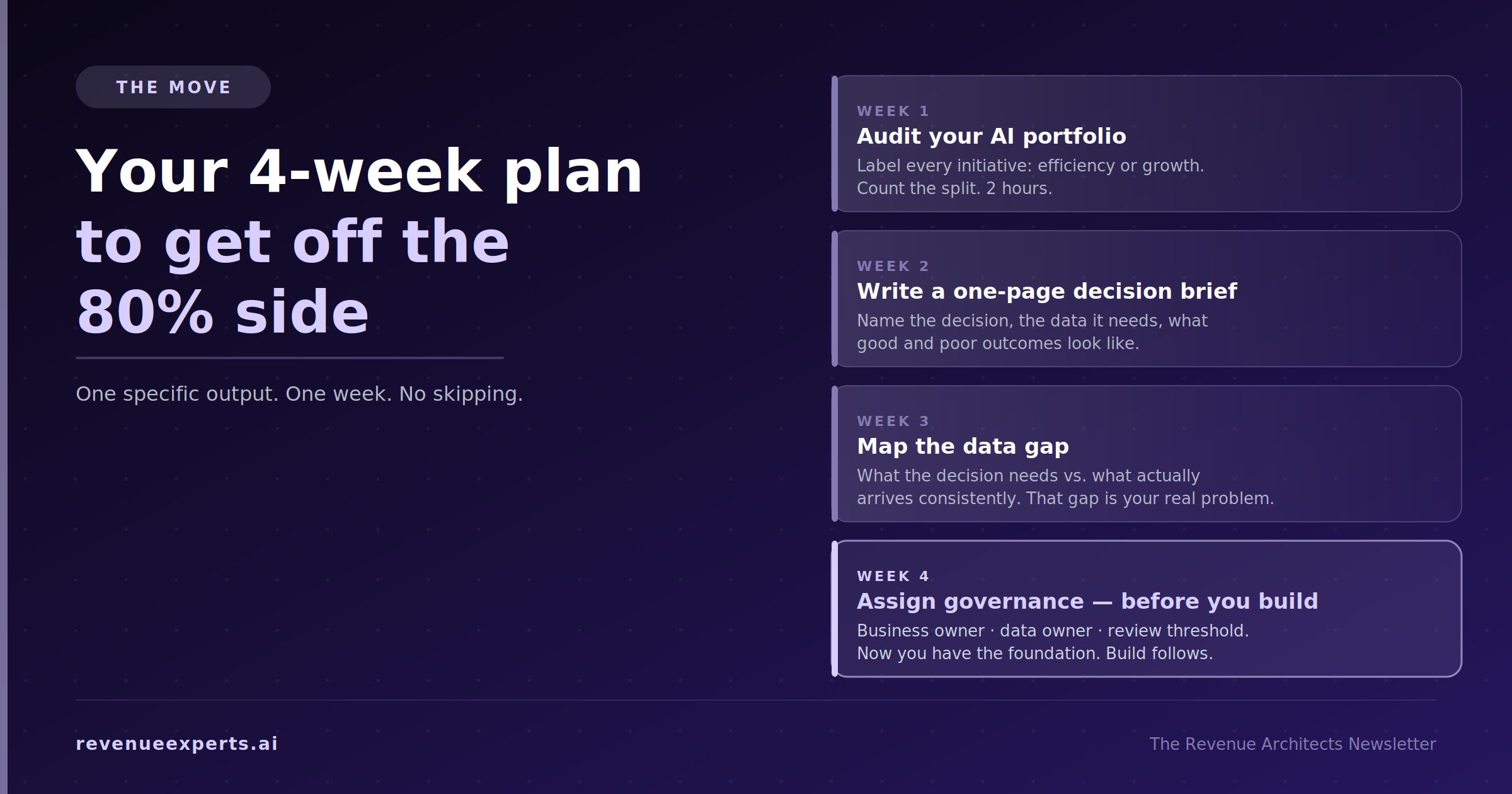

Your four-week action plan to get off the 80% side

This is not a strategy exercise. It is a sequence of specific actions, one per week, that moves you from having AI tools to having AI decisions.

Week 1 — Audit your current AI portfolio

Pull every AI initiative currently active in your organization. Include vendor tools, internal builds, anything past proof-of-concept, and anything you are paying for monthly. Assign each one a single label: efficiency (existing work faster or cheaper) or growth (new revenue, new market, or new decision capability you do not have today).

Count the split. If your list is entirely efficiency, you are in the 80%. If you have one or two items in the growth column, note what they are and whether they have a named owner and a measurable outcome attached to them. Most will not.

This audit does not take a day. It takes two hours if you have the list in front of you.
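If the list already lives in a spreadsheet, the count is a few lines of code. This is a minimal sketch with made-up initiative names; `portfolio_split` is a hypothetical helper, and the only two labels it accepts are the ones defined above.

```python
from collections import Counter

VALID_LABELS = {"efficiency", "growth"}


def portfolio_split(initiatives):
    """Return (efficiency_count, growth_count, growth_share) for a list of
    (name, label) pairs."""
    for name, label in initiatives:
        if label not in VALID_LABELS:
            raise ValueError(f"{name!r} has unknown label {label!r}")
    counts = Counter(label for _, label in initiatives)
    total = len(initiatives)
    growth_share = counts["growth"] / total if total else 0.0
    return counts["efficiency"], counts["growth"], growth_share


# Illustrative portfolio (hypothetical initiatives).
initiatives = [
    ("Invoice matching bot", "efficiency"),
    ("Call summary tool", "efficiency"),
    ("Adjacent-market lead scoring", "growth"),
]

efficiency_count, growth_count, growth_share = portfolio_split(initiatives)
print(efficiency_count, growth_count, round(growth_share, 2))
```

Forcing every item into exactly one of two labels is the useful part; an initiative that resists labeling usually has no defined outcome.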

Week 2 — Identify your one growth decision

Using the IBM framework from The Build, identify the single decision in your revenue system that, if made faster and more accurately, would have the most direct impact on growth. Choose the most valuable decision to get right, not the easiest one to automate.

Write a one-paragraph brief for it: what the decision is, who currently makes it, what data goes into it today, and what a good outcome looks like versus a poor one. Share it with one other person in your organization and ask them to push back on whether it is actually measurable. If they cannot tell you how you would know the AI-assisted version is working, refine the brief until they can.

Week 3 — Map the data and find the gap

For the decision you identified in Week 2, follow IBM's Step 2 exactly. List every data source that should feed that decision. Then check each one: is it accessible, is it current, and does it arrive consistently or only when someone manually assembles it?

You are looking for the gap between the data the decision needs and the data that actually gets used today. That gap is almost always why the decision is slow or inconsistent in the first place. Document it. You now have a data brief, not an AI brief. That is the correct starting point — IBM learned this the hard way at scale, and you can skip that part.

Week 4 — Assign governance before you build

Before any tool gets selected or any build begins, name the business owner and the data owner, and set the review threshold. Write down what number or signal would cause you to pause the AI-assisted decision and return to human review. It does not need to be sophisticated. It needs to exist before you deploy, not after something goes wrong.

Once you have these four outputs (the portfolio audit, the one-paragraph decision brief, the data gap map, and the governance assignment), you have what IBM had before they scaled Client Zero. You have the foundation. The build that follows it will be faster and more trustworthy than any pilot launched without it.

If at the end of Week 4 you want a structured way to turn the decision brief into a full opportunity map with build specs and a revenue case, that is what the Opportunity Architecture Sprint produces. If you want to understand how your current AI foundations compare to what the 20% have built, the AI Signal Benchmark gives you that read before you commit to a build direction.

The gap between the 20% and the 80% is not a technology gap. It is a sequencing gap. The 20% did the groundwork first. Start there.

Until next week,

Elizabeta Kuzevska, Co-Founder, Revenue Experts AI · revenueexperts.ai | onlinemarketingacademy.ai · X: @ekuzevska

Sources:

PwC 2026 AI Performance Study, April 13, 2026: https://www.pwc.com/gx/en/news-room/press-releases/2026/pwc-2026-ai-performance-study.html

SiliconANGLE, April 14, 2026: https://siliconangle.com/2026/04/14/leadership-enterprise-ai-shift-ibmtransformationedgeseries/