Hi,

Greg Beltzer, Chief Customer Officer for AI and Agentforce at Salesforce, told a room of B2B revenue leaders at SaaStr AI London earlier this year that Salesforce's own Agentforce rollout had not gone smoothly. The data was not clean. Processes were not ready. Workflows had to be rewritten while the deployment was already running.

This is Salesforce. The company that invented CRM software. If their data was not in the right shape for AI agents, the odds that yours is are not high.

That is not a technology problem. It is a data problem. And it is sitting in your CRM right now.

The Signal

AI agents in B2B sales: real returns, real failure rates

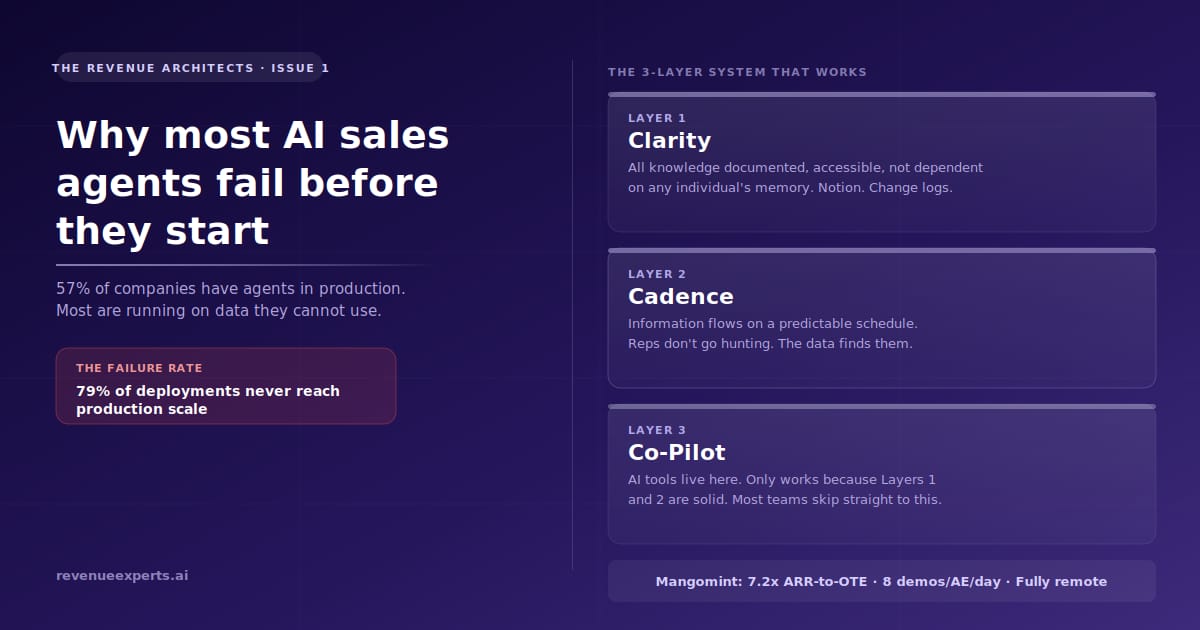

Fifty-seven percent of companies now have AI agents running in production, according to G2's 2025 AI Agents Insights Report, which surveyed more than 1,000 B2B decision-makers. The average ROI from agentic deployments is 171%, with US-based companies averaging 192%, according to OneReach.ai's 2026 market analysis.

Those numbers are real. The deployments producing them are the exception.

Only 21% of enterprises have reached enterprise-wide AI workflow production, according to Stonebranch's 2026 research of 402 IT automation professionals. The other 79% have agents running somewhere — in a pilot, in one team, in one workflow — but not integrated into the revenue system as a whole. Eighty-nine percent of those companies run multiple automation platforms with no unified orchestration layer. The agents exist. They just do not talk to each other, and they draw on incomplete data.

The gap between 57% with agents in production and 21% with production-grade deployment at scale tells you where most companies actually are: running something, getting partial value, and unclear on why the ROI is not matching the projections.

The answer is almost always the same. The data was not ready before the agents went live.

Here is what bad sales data looks like before it becomes a problem. Deals close with no notes. Call logs are incomplete or missing. Your CRM has fewer data points than your marketing automation platform, even though it is supposed to receive data from both marketing and sales. Your top rep has the highest close rate on the team, and almost nothing is recorded in the system because they work from memory, text messages, and instinct. None of this is unusual. Most B2B sales organizations look like this before they deploy AI.

Here is what it looks like after. The agent qualifies leads on incomplete signals, flags the wrong accounts as high-intent, and generates follow-up sequences that miss context the rep knew from memory but never recorded. The rep stops trusting the agent within six weeks. The pilot ends. The conclusion is that the technology did not work.

The technology worked. The foundation was not there.

The Build

How Marshelle Mooney built a 7.2x ARR-to-OTE sales organization at Mangomint by doing it in the right order

Mangomint is a vertical SaaS for salons and spas, with a roughly $4,000 ACV and a five-day sales cycle. Marshelle Mooney, VP of Sales, runs eight demos per AE per day, fully remote. Her top performers close 35 logos a month against a 20-logo quota.

A 7.2x ARR-to-OTE ratio is uncommon across SaaS segments. In SMB, it is almost unheard of—SaaStr notes that 3-5x is typical, and above 6x is exceptional. And in 2026, Mooney announced she is not raising the quota, even though she could, because the systems are not yet ready to support it.

That decision is worth stopping on. Most leaders with top reps closing 35 logos against a 20-logo quota would raise the bar. Mooney chose to use AI to bring the rest of the team up to that level instead. Her reasoning: if you raise quota before the systems are ready, you are asking reps to do more before you have given them what they need to do it. You create frustration, not results.

The system she built runs on three layers. The order is not optional.

Layer 1: Clarity

Everything a rep needs to do their job without asking a manager. Playbooks, objection handling, comp details, product updates. If someone has to send a Slack message to find an answer, this layer is failing. At Mangomint, all of it lives in Notion, accessible by voice dictation or a few keystrokes.

The Clarity layer also includes a decision change log. Every meaningful change (product updates, comp plan shifts, process changes) is documented with a date. Access is controlled by role. The expectation is explicit: this is where you go when something changes. It is not the manager's job to personally inform every rep. It is the rep's job to check.

In a fast-moving market this pays off quickly. Comp changes do not become grievances. Product changes do not produce wrong demos. The knowledge exists somewhere that everyone can find it.

Layer 2: Cadence

When information flows, and how reliably. Every functional leader posts a weekly update. The minimum bar is one substantive update per week. Win rate changes surface in Slack before the weekly review. If that discipline holds, most follow-up questions disappear before they are asked.

The Cadence layer transforms information from something people have to chase into something that finds them. This matters for AI because agents work from information that exists in the system. If information is shared only in meetings and one-off messages, it is not in the system, and the agent cannot use it.

Layer 3: Co-Pilot

This is where the AI tools live. Momentum handles call intelligence and pushes summaries automatically into Notion and Salesforce without the rep touching a keyboard. Snowflake holds the data warehouse. Sigma sits on top for dashboards the leadership team actually builds and uses. Card failures route instantly to the rep who owns the account.

The Co-Pilot layer only works because the first two are solid. Mooney's point: automating a fragmented organization makes the fragmentation faster. Tools amplify what is already there. If what is already there is incomplete, inconsistent, and undocumented, the tools amplify that.

This is why the 79% of enterprises with agents running somewhere, but not enterprise-wide, are not getting production-grade results. They built the third layer without the first two.

The full build is covered in SaaStr's writeup of Mooney's session. The vertical does not matter. The order does.

This layered approach to revenue system architecture is what our team builds with B2B companies through our Versatile Agent Architects service.

The Move

The CRM data audit: four checks before you deploy any AI agent

This audit takes one afternoon. It checks for the problem Mooney discovered when she started rolling out AI tools at Mangomint: her Salesforce instance was a mess. Deals closed with no notes. No call logs. Her marketing automation platform had more data points than her CRM, even though the CRM was supposed to receive data from both marketing and sales.

Run these four checks in order. Record your results. They tell you which layer to build first.

Check 1: The closed deal audit

Pull the last 20 closed deals from your CRM, won and lost. For each one, check whether it has all three of the following: complete notes from at least one call, a call log or recording reference, and a contact record with job title and company name filled in.

Pass threshold: 15 of 20 deals meet all three criteria.

If you are below 15, your agent will qualify leads and generate follow-up sequences using data from a fraction of your actual deal history. Fix this before deploying anything.
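If your CRM export is easier to score in a script than by hand, the check can be sketched roughly like this. The `ClosedDeal` fields are hypothetical stand-ins for whatever your CRM export actually contains, and deciding whether notes count as "complete" is still a human judgment recorded here as a simple yes or no.

```python
from dataclasses import dataclass


@dataclass
class ClosedDeal:
    # Hypothetical field names; map them to your own CRM export.
    has_call_notes: bool   # complete notes from at least one call
    has_call_log: bool     # call log or recording reference present
    job_title: str         # contact record: job title
    company_name: str      # contact record: company name


def deal_is_complete(deal: ClosedDeal) -> bool:
    """A deal counts only if it meets all three criteria."""
    contact_filled = bool(deal.job_title.strip()) and bool(deal.company_name.strip())
    return bool(deal.has_call_notes and deal.has_call_log and contact_filled)


def closed_deal_audit(deals, pass_threshold=15):
    """Return (number of complete deals, whether the check passes)."""
    complete = sum(1 for deal in deals if deal_is_complete(deal))
    return complete, complete >= pass_threshold
```

Feed it the last 20 closed deals, won and lost. The default threshold of 15 matches the pass bar above.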

Check 2: The platform comparison

Count the number of populated data fields in your CRM for a sample of 10 active accounts. Then count the same in your marketing automation platform. Your CRM should have more, because it receives the marketing platform's data as well as your sales team's direct activity.

If your marketing platform has more populated fields, your reps are working deals outside the CRM: in email, on their phones, in memory, and none of that is accessible to an agent.
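A minimal sketch of that count, assuming you can export the same 10 accounts from each system as a list of dictionaries (one per account, field name to value). What counts as "populated" is an assumption here: anything that is not empty, blank, or missing.

```python
def is_populated(value) -> bool:
    """Treat None, blank strings, and empty collections as unpopulated."""
    if value is None:
        return False
    if isinstance(value, str):
        return bool(value.strip())
    if isinstance(value, (list, dict, tuple, set)):
        return len(value) > 0
    return True


def count_populated(records) -> int:
    """Total populated fields across a sample of account records."""
    return sum(1 for record in records for value in record.values() if is_populated(value))


def compare_platforms(crm_records, marketing_records) -> dict:
    """Compare populated-field totals for the same sample of accounts."""
    crm_total = count_populated(crm_records)
    marketing_total = count_populated(marketing_records)
    return {
        "crm": crm_total,
        "marketing": marketing_total,
        "crm_has_more": crm_total > marketing_total,
    }
```

If `crm_has_more` comes back `False`, the check fails.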

Check 3: The top rep dependency test

For your highest-performing rep: if they left the company this week, what percentage of their deal knowledge exists in the CRM? Not their pipeline — their knowledge. The signals they read, the objections they handle, and the language they use with specific account types.

If the answer is less than 50%, you have a person-dependent sales operation. Agents learn from what is in the system. If your best performance lives outside it, the agent learns from your median, not your ceiling.

Check 4: The information flow test

Ask three reps (one junior, one mid-level, and one senior) the same question: where do you go when something changes in the company and you need to know about it?

If the answers differ, or if any of them say "I ask my manager" or "it comes up in the weekly call," you do not have a functioning Clarity or Cadence layer. Information flow is person-dependent and inconsistent. An agent in that environment will miss context that reps pick up informally.
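If you want to log the answers rather than eyeball them, a rough scoring sketch might look like the one below. The exact-match comparison and the two red-flag phrases are simplifying assumptions. In practice you will read the answers yourself and decide whether they point to the same place.

```python
# Phrases that signal person-dependent information flow (taken from the check above).
RED_FLAGS = ("ask my manager", "weekly call")


def information_flow_check(answers: dict) -> bool:
    """answers maps a rep level (e.g. 'junior') to that rep's answer.

    Passes only if every rep names the same place and no answer
    depends on a person or a meeting.
    """
    normalized = {answer.strip().lower() for answer in answers.values()}
    consistent = len(normalized) == 1
    person_dependent = any(flag in answer for answer in normalized for flag in RED_FLAGS)
    return consistent and not person_dependent
```

Three reps who all answer "the Notion change log" pass. One "I ask my manager" fails the check, whatever the other two say.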

What your results mean

Pass all four: you are ready to evaluate Co-Pilot layer tools. Your foundation is solid enough to amplify.

Fail one or two: identify which layer is missing, Clarity or Cadence, and build that before buying any agent tooling. A week of documentation work will yield more ROI than a month spent configuring agents on incomplete data.

Fail three or four: fixing the data is the project. Not the agent. The Mangomint results came from building in order. The 79% who stall short of enterprise-wide production are the ones who skipped to the third layer without the first two.
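The scoring rule above is simple enough to write down as a function. This is an illustrative sketch only: the check names are placeholders, and the returned strings paraphrase the three outcomes.

```python
def interpret_audit(results: dict) -> str:
    """results maps each of the four checks to True (pass) or False (fail)."""
    if len(results) != 4:
        raise ValueError("Expected results for exactly four checks")
    failures = sum(1 for passed in results.values() if not passed)
    if failures == 0:
        return "Ready to evaluate Co-Pilot layer tools."
    if failures <= 2:
        return "Build the missing Clarity or Cadence layer before buying agent tooling."
    return "Fixing the data is the project, not the agent."


# Example with placeholder check names and made-up results.
example = {
    "closed_deal_audit": True,
    "platform_comparison": False,
    "top_rep_dependency": True,
    "information_flow": True,
}
print(interpret_audit(example))
```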

If the audit surfaces gaps and you want a structured build plan, the Opportunity Architecture Sprint turns that picture into a 48-hour decision memo with a build sequence your leadership team can act on.

That is Issue 1. The pattern holds across company size and vertical: build the foundation before the automation, or the automation exposes the gap.

Reply if something here landed differently than you expected. I read everything.

Elizabeta Kuzevska
Co-Founder, Revenue Experts AI
revenueexperts.ai

Sources:

G2 AI Agents Insights Report, 2025 — 1,000+ B2B decision-makers

Stonebranch Automation Research, 2026 — 402 IT automation professionals

You received this because you requested the Revenue Architects briefing.